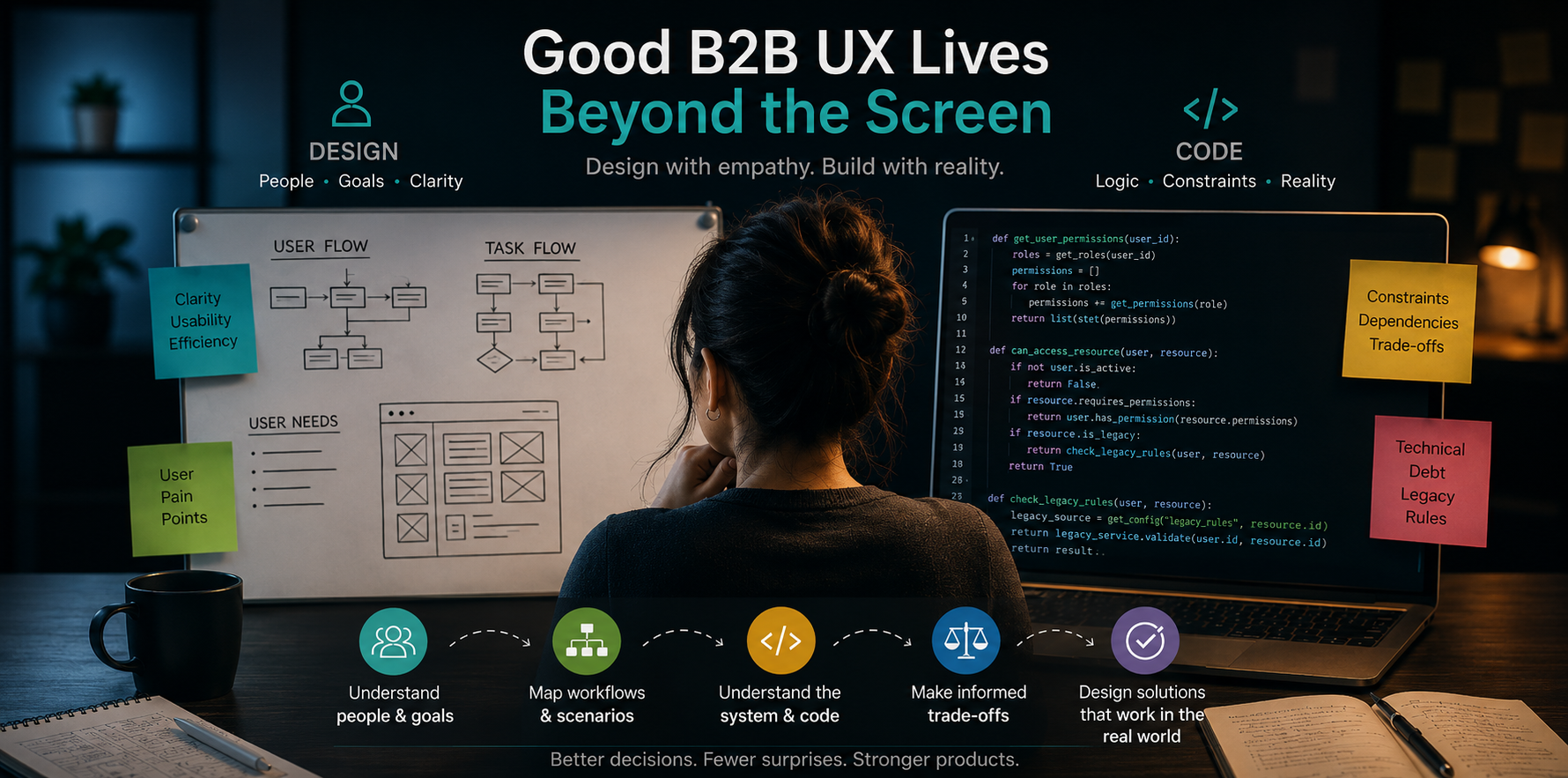

What looks like a screen problem is often a workflow, logic, or decision problem underneath

B2B products carry more than interface complexity.

They are made up of workflows, permissions, integrations, approval chains, configuration rules, customer-specific logic, technical debt, and operational edge cases. What looks like a messy screen is often the visible layer of a much deeper product problem.

Good design matters: clear layouts, better language, stronger hierarchy, and simpler flows all improve the experience. The difference in B2B is that those improvements need to be grounded in how the product actually works at a technical level.

A flow can look strong in isolation. Then development starts, and the team discovers that it does not fit the system: it ignores important business rules, creates maintenance issues, or assumes data that does not exist.

This is where technical UX thinking becomes valuable.

It helps teams connect user needs, product logic, business rules, and technical constraints while decisions are still flexible enough to change.

B2B UX starts beneath the screen

In many consumer-facing products, a confusing experience can often be improved by simplifying the interface, rewriting labels, removing steps, or making the path clearer.

In B2B products, the same problem usually has deeper roots.

A confusing form might be connected to pre-existing backend rules.

A missing option might be tied to permission logic.

A long workflow might exist because of compliance, approvals, or customer configuration requirements.

A clunky experience might reflect years of product decisions that were never revisited.
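To make the "missing option" case concrete, here is a minimal sketch of how permission logic and record state can decide which actions a screen is able to offer. The roles, actions, and the locked-record rule are hypothetical, chosen only for illustration; they do not describe any specific product.

```typescript
// Hypothetical roles and actions, assumed for illustration only.
type Role = "requester" | "approver" | "admin";
type Action = "view" | "edit" | "approve" | "delete";

const ALL_ACTIONS: Action[] = ["view", "edit", "approve", "delete"];

// Assumed permission table: which actions each role may perform.
const allowedActions: Record<Role, Action[]> = {
  requester: ["view", "edit"],
  approver: ["view", "approve"],
  admin: ["view", "edit", "approve", "delete"],
};

interface ActionAvailability {
  action: Action;
  available: boolean;
  reason?: string; // Present only when the action is unavailable.
}

// Returns every action together with the reason it may be missing,
// so the interface can explain the gap instead of silently hiding it.
function explainActions(role: Role, recordLocked: boolean): ActionAvailability[] {
  return ALL_ACTIONS.map((action): ActionAvailability => {
    if (allowedActions[role].indexOf(action) === -1) {
      return { action, available: false, reason: `Not permitted for role "${role}".` };
    }
    // Assumed business rule: locked records cannot be edited or deleted.
    if (recordLocked && (action === "edit" || action === "delete")) {
      return { action, available: false, reason: "Record is locked while awaiting approval." };
    }
    return { action, available: true };
  });
}
```

Calling `explainActions("requester", false)` reports `approve` as unavailable for a permission reason, while `explainActions("admin", true)` reports `edit` as unavailable because of record state. The same missing button can have two different causes, and the right design response differs for each.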

So the useful question is not only:

How do we make this easier to use?

It is also:

Why does it work this way in the first place?

That second question changes the quality of the work. I have seen flows that looked like UI problems at first, but once I started asking where the data came from, what depended on it, and what the user was trying to complete, the issue became broader.

Sometimes the answer really was a better screen. On other occasions, the problem could only be fixed by a clearer product decision.

The real cost is rework

One of the biggest risks in B2B product teams is moving too far with unclear assumptions.

A feature gets defined.

A design gets produced.

Engineering starts asking questions.

Edge cases appear.

The scope changes.

The flow needs rethinking.

The team realises the original problem was not clear enough.

By that point, people have spent time discussing, designing, estimating, planning, and sometimes building around an assumption that should have been challenged earlier.

I have experienced this from both sides, many times.

As a developer, unclear product decisions often showed up as implementation questions. As a designer, I saw how early ambiguity could turn into design rework, delivery friction, or scope tension later.

That is why clarity before delivery matters. Technical UX thinking helps teams find the gaps while the work is still flexible enough to change.

Simple is not always right

Designers are often trained to simplify and, most of the time, that is useful.

The danger appears when simplification removes complexity the product genuinely needs to handle. I worked on a B2B ITSM suite where designers instinctively oversimplified flows and interactions without taking into account that, given the product's specific application and context, those flows and interactions actually delivered value to users.

A workflow may look too long, while some steps exist because different roles need different information.

A screen may look overloaded, while some details are essential for decision-making.

A process may feel repetitive, while repetition may reflect audit, compliance, or customer-specific requirements.

The real goal is to make the right complexity understandable.

When I worked on a mobile self-service product, one important product question was whether mobile should reproduce the desktop experience. From a delivery point of view, desktop parity felt safe: the patterns already existed, the features were known, and the scope seemed easier to define. However, mobile users often need quicker access, shorter flows, clearer prioritisation, and fewer assumptions carried over from desktop. The design challenge was to understand what mobile self-service should actually be responsible for.

That was a technical UX question as much as a design question. It involved context, behaviour, constraints, knowledge of the underlying architecture of the software, effort, and value.

B2B users need clarity more than decoration

Most B2B users are trying to get work done. They may need to log a request, approve something, resolve an issue, find information, check progress, update a record, or make a decision that affects another team.

In that context, UX quality is practical.

Can the user understand what to do next?

Can they trust the information?

Can they avoid mistakes?

Can they recover when something goes wrong?

Can they complete the task without needing support?

Can they tell what has happened and what still needs action?

Error states, permissions, system feedback, empty states, validation rules, loading behaviour, and edge cases all shape the experience. They may not be the most visible parts of design, but in B2B products, they often determine whether the product feels reliable or frustrating.
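One way to keep these less visible states from being forgotten is to model them explicitly, so that each one needs a deliberate design answer. The sketch below is a minimal illustration, not a prescribed implementation: the `RequestListState` type, its state names, and the messages are all hypothetical.

```typescript
// Hypothetical view states for a request list; names are illustrative only.
type RequestListState =
  | { kind: "loading" }
  | { kind: "empty" }
  | { kind: "forbidden"; requiredRole: string }
  | { kind: "error"; message: string; canRetry: boolean }
  | { kind: "ready"; items: string[] };

// Maps each state to the message the user would see.
// Because the switch covers every member of the union, adding a new
// state without handling it here produces a compile-time error.
function describeState(state: RequestListState): string {
  switch (state.kind) {
    case "loading":
      return "Loading requests...";
    case "empty":
      return "No requests yet. Create one to get started.";
    case "forbidden":
      return `You need the ${state.requiredRole} role to view requests.`;
    case "error":
      // Tell the user how to recover, not only what went wrong.
      return state.canRetry
        ? `${state.message} Try again.`
        : `${state.message} Contact support.`;
    case "ready":
      return `${state.items.length} request(s) found.`;
  }
}
```

The value of the approach lies less in the code than in the conversation it forces: design, product, and engineering have to agree on what the user sees in each state before the feature is built.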

A polished interface with weak system behaviour will still feel broken.

Faster workflows need clearer direction

AI has become part of everyday product work: teams can now draft requirements, summarise research, generate ideas, and turn rough input into structured artefacts much faster. That speed is useful, but it also makes clarity more important. In B2B products, a polished artefact can still carry weak assumptions, and a generated flow can still miss a business rule, an edge case, or a technical constraint.

Technical UX is really about decision quality

Technical UX thinking makes product decisions stronger because it connects the interface to the system behind it.

In B2B products, design decisions rarely affect a single screen. They have an impact on the existing codebase and architecture, legacy workflows, backend constraints, integrations, permissions, data models, maintainability, engineering effort, delivery timelines, support burden, and long-term product direction.

That means UX has to stay connected to how the product is actually built.

The best B2B UX work asks:

What is the user trying to achieve?

What does the business need?

What does the current architecture allow?

Which parts of the system are flexible?

Which constraints come from legacy requirements?

What would be expensive or risky to change?

What dependencies would this design introduce?

What should be redesigned, and what should stay stable?

What decision will still make sense six months from now?

Those are technical questions, product questions, and UX questions at the same time.

This is also where evidence matters. Customer conversations, support tickets, usage patterns, adoption data, app store signals, and feedback from engineering or support teams can help teams move beyond opinion.

In my own work, introducing product measurement helped shift the conversation from what we thought was happening to what we could actually observe. But just as importantly, understanding the existing product architecture helped make those decisions realistic. It made the UX work less about ideal screens in isolation, and more about changes that could actually hold up inside the product.

Final thoughts

B2B products need more than clean interfaces. They need UX thinking that understands both the experience on the surface and the infrastructure behind it.

That combination matters because design decisions do not live in isolation: they have to work for the user, support the product goal, respect the existing architecture, and make sense within the system that already exists.

In complex products, the strongest UX is rarely just the simplest-looking screen. It is the solution that makes the right complexity clear for the user, while also being realistic, maintainable, and worth building in the codebase behind it.